In the Baker Encyclopedia of Christian Apologetics, by Norm Geisler (Grand Rapids: Baker, 1998; p. 532), there is a comment about the number of textual variants among New Testament manuscripts:

“Some have estimated there are about 200,000 of them. First of all, these are not ‘errors’ but variant readings, the vast majority of which are strictly grammatical. Second, these readings are spread throughout more than 5300 manuscripts, so that a variant spelling of one letter of one word in one verse in 2000 manuscripts is counted as 2000 ‘errors.’”

There are several problems with this paragraph. One is that saying variant readings are not errors is an odd way of putting things. If the primary goal of NT textual criticism is to recover the wording of the autographa (i.e., the texts as they left the apostles’ hands), then any deviation from that wording is, indeed, an error. It may well be a rather minor error, as the vast majority of them are (in fact, something so trivial that it cannot even be translated), but it is an error nevertheless. The author, however, is most likely equating error with some reading that would render the Bible errant and fallible. It is quite true that (virtually) no viable variants are major threats to inerrancy; the major problems that the doctrine of inerrancy faces are essentially never found in textually disputed passages in which one reading creates the problem and another erases it.

The larger issue, however, is how the number of variants was arrived at. Geisler got his information (directly or indirectly) from Neil R. Lightfoot’s How We Got the Bible (Grand Rapids: Baker, 1963), a book now fifty years old. Lightfoot says (53-54):

“From one point of view it may be said that there are 200,000 scribal errors in the manuscripts, but it is wholly misleading and untrue to say that there are 200,000 errors in the text of the New Testament. This large number is gained by counting all the variations in all of the manuscripts (about 4,500). This means that if, for example, one word is misspelled in 4,000 different manuscripts, it amounts to 4,000 ‘errors.’ Actually in a case of this kind only one slight error has been made and it has been copied 4,000 times. But this is the procedure which is followed in arriving at the large number of 200,000 ‘errors.’”

In other words, Lightfoot was claiming that textual variants are counted by the number of manuscripts that support such variants, rather than by the wording of the variants. His method was to multiply each wording difference by the number of manuscripts that contain it. This book has been widely influential in evangelical circles. I believe over a million copies of it have been sold. And this particular definition of textual variants has found its way into countless apologetic works.

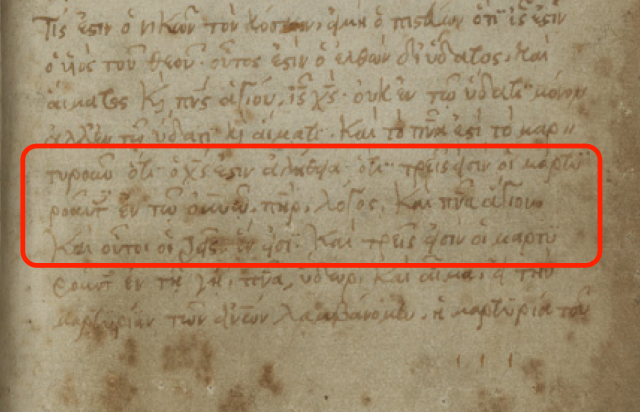

The problem is, the definition is wrong. Terribly wrong. A textual variant is simply any difference from a standard text (e.g., a printed text, a particular manuscript, etc.) that involves spelling, word order, omission, addition, substitution, or a total rewrite of the text. No textual critic defines a textual variant the way that Lightfoot and those who have followed him have done. Yet, the number of textual variants comes from textual critics. Shouldn’t they be the ones to define what this means since they’re the ones doing the counting?

Let me demonstrate how Lightfoot’s definition is way off. Today we know of more than 5600 Greek NT manuscripts. Among these, we know of about 2000–3000 Gospels manuscripts, 800 Pauline manuscripts, 700 manuscripts of Acts and the general letters, and about 325 manuscripts of Revelation. These numbers do not include the lectionaries, over 2000 of them, that are mostly of the Gospels. At the same time, not all the manuscripts are complete copies. The earlier manuscripts are fragmentary, sometimes covering only a few verses. The later manuscripts, however, generally include at least all four Gospels or Acts and the general letters or Paul’s letters or Revelation. A reasonable average estimate is that for any given textual problem there are a thousand Greek manuscripts (more in the Gospels, fewer elsewhere). This assumes that fewer than 20% of all the Greek manuscripts “read” in any given passage, which is probably a conservative estimate.

Putting all this together, we can assume that an average of 1000 Greek manuscripts are involved in any textual problem. Now, assume that we start with the modern critical text of the Greek New Testament (the Nestle-Aland, 28th edition). Most today would say that this text is based largely on a minority of manuscripts that constitute no more than 20% (a generous estimate) of all manuscripts. So, on average, if there are 1000 manuscripts that have a particular verse, the Nestle-Aland text is supported by 200 of them. This would mean that for every textual problem, the variant(s) is/are found in an average of 800 manuscripts. But, in reality, the wording of the Nestle-Aland text is often found in the majority of manuscripts. So, we need a more precise way to define things. That has been provided for us in The Greek New Testament according to the Majority Text by Hodges and Farstad. They listed in the footnotes all the places where the majority of manuscripts disagreed with the Nestle-Aland text. The total came to 6577.

OK, so now we have enough data to make some general estimates. Even if we assumed that these 6577 places were the only textual problems in the New Testament (a demonstrably false assumption, by the way), Lightfoot’s definition could be shown to be palpably false. 6577 × 800 = 5,261,600. That’s more than five million, just in case you didn’t notice all the commas. Based on Lightfoot’s definition of textual variants, this is how many we would actually have, conservatively estimated. Obviously, that’s a far cry from 200,000!
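For readers who want to check the arithmetic, here is a minimal sketch in Python. The figures are the ones given above (1000 manuscripts per passage, 20% support for the Nestle-Aland wording, 6577 Majority Text differences); the variable names are my own, and the averages are the rough assumptions already stated, not measured values.

```python
# Rough estimate of the count that Lightfoot's definition would produce.
# All inputs are the averages assumed in the text, not measured data.
manuscripts_per_passage = 1000     # assumed average number of Greek manuscripts per textual problem
base_text_support_percent = 20     # assumed share of manuscripts agreeing with Nestle-Aland
majority_text_differences = 6577   # places where the Majority Text disagrees with Nestle-Aland

# Manuscripts that disagree with the base text at an average textual problem.
disagreeing_manuscripts = (
    manuscripts_per_passage * (100 - base_text_support_percent) // 100
)

# Lightfoot-style count: each wording difference multiplied by the manuscripts that carry it.
lightfoot_style_count = majority_text_differences * disagreeing_manuscripts

print(disagreeing_manuscripts)
print(f"{lightfoot_style_count:,}")
```

Changing either assumed average shifts the total, but it would take a drastic change to bring it anywhere near 200,000.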

Or, to put this another way: this errant definition requires that there be no more than about 250 textual problems in the whole New Testament (250 textual variants × 800 manuscripts that disagree with the printed text = 200,000). (It should be noted that, for simplicity’s sake, I am counting a textual problem as having only one variant from the base text, even though this is frequently not the case.) If that were so, how could the United Bible Societies’ Greek New Testament list over 1400 textual problems? And how could the Nestle-Aland text list over 10,000?
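The same check can be run in reverse. The sketch below, again in Python with variable names of my own choosing, works out how many textual problems the 200,000 figure would allow under Lightfoot's definition, and what his method would yield for the apparatus sizes just mentioned (treated here as round lower bounds, since the text says "over 1400" and "over 10,000").

```python
# Working backward from the 200,000 figure under Lightfoot's definition.
claimed_total = 200_000
disagreeing_manuscripts = 800      # assumed average from the estimate above

# Maximum number of textual problems the claimed total would allow.
implied_problems = claimed_total // disagreeing_manuscripts

# Lower bounds for the textual problems listed in the two printed editions.
ubs_listed_problems = 1_400
nestle_aland_listed_problems = 10_000

# What Lightfoot's method would give for each apparatus.
ubs_lightfoot_count = ubs_listed_problems * disagreeing_manuscripts
nestle_aland_lightfoot_count = nestle_aland_listed_problems * disagreeing_manuscripts

print(implied_problems)
print(f"{ubs_lightfoot_count:,}")
print(f"{nestle_aland_lightfoot_count:,}")
```

Either apparatus alone, counted Lightfoot's way, already runs well past the 200,000 he was trying to explain.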

And again, this five million is not even close to the actual number. I took a very conservative approach by only looking at the differences from the majority of manuscripts. But if one started with Codex Bezae as the base text for the Gospels and Acts and Codex Claromontanus for the letters, the number of variants (counted Lightfoot’s way) from these two would be astronomical. My guess is that it would be well over 20 million. Or if one started with Codex Sinaiticus, the only complete New Testament written with capital (or uncial) letters, the numbers would probably exceed 30 million, largely because Sinaiticus spells words in some strange ways that are not shared by very many other manuscripts. You can see that the definition of a textual variant as a combination of wording differences times manuscripts is rather faulty. Counting this way results in tens of millions of textual variants, when the actual number is minuscule by comparison. And that’s because we only count differences in wording, regardless of how many manuscripts attest to them.
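To make the difference between the two ways of counting concrete, here is a toy sketch in Python. The manuscripts and readings are invented purely for illustration (they do not correspond to any real witnesses), and the function names are hypothetical.

```python
# Toy illustration: one passage, five invented manuscripts, one base text.
base_reading = "reading-A"

# Hypothetical witnesses and the wording each one has at this passage.
witnesses = {
    "ms1": "reading-A",
    "ms2": "reading-B",
    "ms3": "reading-B",
    "ms4": "reading-B",
    "ms5": "reading-C",
}


def count_distinct_variants(base, readings):
    """Count each differing wording once, however many manuscripts carry it."""
    return len({reading for reading in readings if reading != base})


def count_lightfoot_style(base, readings):
    """Count every manuscript that differs from the base text."""
    return sum(1 for reading in readings if reading != base)


print(count_distinct_variants(base_reading, witnesses.values()))
print(count_lightfoot_style(base_reading, witnesses.values()))
```

The first function follows the way textual critics count: two variants at this passage. The second follows Lightfoot's description and reports four, a number that grows with every additional manuscript that copies the same wording.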

All this is to say: a variant is simply the difference in wording found in a single manuscript or a group of manuscripts (either way, it’s still only one variant) that disagrees with a base text. Further, there aren’t only 200,000. That may have been the best estimate in 1963, when we knew of fewer manuscripts. But with the work done on Luke’s Gospel by the International Greek New Testament Project, Tommy Wasserman’s work on Jude, and Münster’s work on James and 1-2 Peter, the estimates today are closer to 400,000. Some even claim half a million. In short, as Bart Ehrman has so eloquently yet simply put it, there are more variants among the manuscripts than there are words in the NT.

Although this may leave some feeling uneasy, it is imperative that Christians and non-Christians be honest with the data. I would urge those who have used Lightfoot’s errant definition to abandon it. It’s demonstrably wrong, and citing it reveals a fundamental ignorance about textual criticism. And I would hope that the publishers of numerous apologetics books would get the data right. The last thing that Christians should be doing is to latch on to some spurious ‘fact’ in defense of the faith.

Postscript

I have recently been in correspondence with some apologists (including Geisler), and I am happy to report that they are revising their definition of what constitutes a textual variant. Two or three of them have appealed to their publishers to correct the data in later printings.