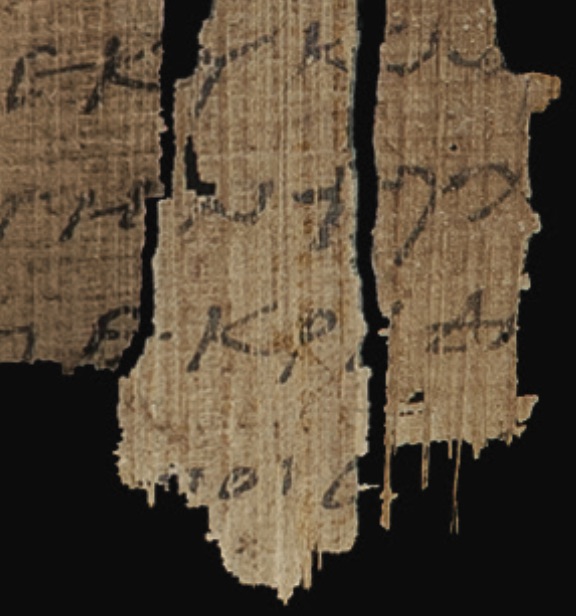

If you could stack up all handwritten manuscripts of the New Testament—Greek, Syriac, Latin, Coptic, all languages—how tall would the stack be?

I was recently challenged on my numbers in a Facebook discussion in the group “New Testament Textual Criticism.”

I have said in many lectures that it would be the equivalent of about four and a half Empire State Buildings stacked on top of each other. How did I come up with that number?

First, I needed to figure out the total number of pages in all our Greek NT manuscripts. Ancillary to that, but helpful for estimating versional witnesses, is determining the average number of pages per manuscript. For Greek NT MSS, my wife did the addition. When I was on sabbatical in Germany in 2002–03, I spent a few months in Tübingen (after several months in Münster). Pati added up all the leaf counts of Greek NT MSS listed in the 1994 Kurzgefaßte Liste. Then she doubled the sum to get the page count. The average-sized MS was well over 400 pages long. The total was something like 2.5 million pages. Of course, now we have the online K-Liste, with quite a few more MSS on it, but the ’94 edition was the latest available at the time. Keep in mind that the folio counts of those MSS that had been mislabeled were not listed in the 1994 K-Liste, although the official number of MSS was listed (and it was higher than the actual number by a couple hundred or so). Today, the official number is, at last count, 5999. The actual number, I believe, is closer to 5800.[1] Many other researchers suggest the actual number to be about 5500, but I have reason to believe it’s higher than this. Regardless, all our numbers are approximations, but we do need a ballpark figure to give us some sort of benchmark.

Next, I needed to estimate the thickness of the average MS. This was trickier to do and could only be an estimate, since the depth of MSS is almost never measured in any metadata write-ups. But CSNTM has been measuring depth on all MSS since 2015. I had previously guesstimated a thickness of 3.5″ per average MS. After I sampled twenty MSS at the National Library of Greece, whose average number of leaves came to 239, the average depth came out to 2.9″ (six-tenths of an inch short of my original guess).

Taking the 2.9″ average above as the depth per MS (including covers, which I estimated at c. 1/2″; I have not measured these, but after looking at hundreds of MSS this figure seems to be on the conservative side) and multiplying by 5800 MSS gives 1402 feet, almost as high as the Empire State Building (1454′).
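The arithmetic behind this figure is easy to check. The following is a minimal sketch, not part of the original lecture material: the function name `stack_height_feet` is purely illustrative, and the inputs are simply the estimates stated above (5800 MSS at an average depth of 2.9″).

```python
INCHES_PER_FOOT = 12
EMPIRE_STATE_FEET = 1454  # height cited in the text


def stack_height_feet(manuscript_count, avg_depth_inches):
    """Height in feet of a stack of manuscripts with a given average depth."""
    return manuscript_count * avg_depth_inches / INCHES_PER_FOOT


# Estimates from the text: 5800 Greek MSS, 2.9 inches deep on average.
greek_height = stack_height_feet(5800, 2.9)
print(round(greek_height))                      # 1402
print(round(EMPIRE_STATE_FEET - greek_height))  # 52 (feet short of the building)
```

Because the height scales linearly with both inputs, a change in either the MS count or the average depth shifts the result proportionally.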

Third, I added the number of versional witnesses. I have estimated somewhere between 15,000 and 20,000 versional witnesses, with the Latin making up the bulk of these. In our many expeditions, I have seen numerous Coptic MSS, far more than I had thought were extant. And I have assumed c. 10,000 Latin MSS. If this number is as low as 8,000, that is offset by the fact that medieval Latin MSS are more sumptuous than their Greek counterparts. Their depth, thus, would be larger than 2.9″, but I’m assuming 10,000 (perhaps too high) at 2.9″ depth (probably too low). Assuming that other versional witnesses are similar in size (though, since none of them is as early as the earliest Greek NTs, and thus not as fragmentary, they will tend to be fuller, with quite a number of them being very large), we come up with the following numbers:

20,800 MSS in total (5800 Greek + 15,000 versional) = 5027 feet

25,800 MSS in total (5800 Greek + 20,000 versional) = 6235 feet

These numbers are a bit smaller than I had estimated previously. I have adjusted my presentation in light of the new sampling of average MS depth. Incidentally, four stacked Empire State Buildings would be 5816 feet tall (and yes, I’m counting the antenna on top).
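The two totals and their Empire State Building equivalents can be reproduced with a few lines of code. This is only a sketch under the assumptions stated in the text (5800 Greek MSS, 15,000 to 20,000 versional MSS, a uniform 2.9″ depth, and a building height of 1454 feet); the helper name `total_stack` is hypothetical.

```python
INCHES_PER_FOOT = 12
EMPIRE_STATE_FEET = 1454   # height including the antenna, as cited in the text
AVG_DEPTH_INCHES = 2.9     # sampled average depth per MS
GREEK_MSS = 5800           # estimated actual number of Greek MSS


def total_stack(versional_mss):
    """Return (MS count, height in feet, height in Empire State Buildings)."""
    count = GREEK_MSS + versional_mss
    feet = count * AVG_DEPTH_INCHES / INCHES_PER_FOOT
    return count, feet, feet / EMPIRE_STATE_FEET


for versions in (15_000, 20_000):
    count, feet, buildings = total_stack(versions)
    print(f"{count:,} MSS: {feet:,.0f} ft, about {buildings:.2f} buildings")
# 20,800 MSS: 5,027 ft, about 3.46 buildings
# 25,800 MSS: 6,235 ft, about 4.29 buildings
```

On these inputs the stack lands at roughly three and a half to four and a quarter Empire State Buildings, which is why the revised figure is somewhat lower than four and a half.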

Bibliographical comparison. I tried to compare apples with apples: the NT in all handwritten copies compared to classical Greek works in all handwritten copies in any language. For the latter, I did not do any scientific count but gave a broad estimate based on selective data for a number of authors. 15 MSS for the average author seemed to be a generous estimate. That stack came to 3.625 feet tall, but I rounded it up to 4 feet.
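The 3.625-foot figure follows from the same formula. The sketch below assumes the classical MSS have the same 2.9″ average depth as the NT MSS; the text does not state this outright, but it is the depth that yields 3.625 feet for 15 MSS. The names used are illustrative only.

```python
INCHES_PER_FOOT = 12
AVG_DEPTH_INCHES = 2.9   # assumption: same average depth as the NT MSS
CLASSICAL_MSS = 15       # generous estimate from the text


def stack_height_feet(count, depth_inches=AVG_DEPTH_INCHES):
    """Height in feet of a stack of `count` manuscripts."""
    return count * depth_inches / INCHES_PER_FOOT


classical_feet = stack_height_feet(CLASSICAL_MSS)
print(round(classical_feet, 3))  # 3.625

# With one shared depth, the height ratio reduces to the ratio of MS counts.
for nt_mss in (20_800, 25_800):
    print(nt_mss, round(nt_mss / CLASSICAL_MSS))
# 20800 1387
# 25800 1720
```

Since both stacks use the same depth, the comparison depends only on the MS counts, so any error in the depth estimate cancels out of the ratio.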

Significance: this IS helpful and not misleading when properly used. Some of the comments in the Facebook discussion appear to rest on false assumptions, e.g., that our NT MSS are written on scrolls (or rolls), that they are single-leaf MSS, or that I was counting Greek only. “When properly used”: In my lectures on this topic, I don’t use these figures in isolation. I offer four questions that need to be answered. The first question is pertinent to this discussion: How many variants are there? I use Peter Gurry’s estimate of 500,000 (Greek MSS only), but since he didn’t count nonsense readings and most spelling variants, the number is far higher when they are included. In other words, I do not minimize the number of variants in the slightest. At this point in the lecture, many Christians tend to squirm in their seats; many others are rejoicing in their minds! Recovering the autographic wording appears to be hopeless.

Then I put things into perspective. The context of the number of MSS was simply that, as Richard Bentley argued three hundred years ago, the more MSS, the more variants. I also compared the date of the earliest NT fragments with the average earliest copies of Greco-Roman writings. Even the earliest copies of (just about) any Greco-Roman literature come many centuries after the autographs were written. Although only about 1% of our Greek MSS are complete NTs, the average size is well over 400 pages. I assume the same for versions.

Significance continued: Why specific numbers? I would argue strenuously that giving numbers in this context is quite helpful for most people. Some think abstractly, and such numbers may seem meaningless to them. But I believe that many, if not most, think more concretely, especially in lay circles and on college campuses, when trying to get a handle on textual criticism. Grasping the topic in a one-hour presentation is challenging enough without seeing concrete numbers! As an apt analogy, consider the Wong-Baker FACES® Pain Rating Scale. Created in 1983 to help children identify the intensity of pain on various parts of their body, this 1–10/happy-face-to-sad-face model soon mushroomed into an international standard for doctors across the globe, for adults as well as children. So it is with giving numbers on MSS. The analogy breaks down, however, because the pain index is completely relative. Logically, if the pain index is considered quite helpful for medical practitioners and their patients, in spite of its relativity, how much more so would the number of NT MSS be for those interested in textual matters? However, it would be misleading to give only the official number because of numerous caveats (see Jacob Peterson’s chapter, “Math Myths: How Many Manuscripts We Have and Why More Isn’t Always Better,” in Myths & Mistakes). So, I give an estimate that is well within the ballpark of the actual number. And I show, century by century through the first millennium, how many MSS we have. I have tried to be very circumspect when dealing with the data, though of course in a popular lecture I dare not show my homework for fear of losing my audience!

The numbers, caveats, context, and other features I use in this lecture have been tweaked over the years, and the Facebook discussion has helped me refine them further. I’m sure some folks will quibble over what I have presented (textual critics are the most hyper-critical scholars I know!), but implications of indolence coupled with suggestions of sham estimates, without giving me the benefit of the doubt, seeing what I have said, or even contacting me about this (only one friend did, and that’s how I learned about the discussion), are not particularly helpful. A little more charity will go a long way.

[1] Note too that a number of kinds of witnesses, in particular certain types of lectionaries, that Gregory had counted were abandoned when Aland took over the numbering system. Hundreds more of these unregistered MSS exist. (Dozens are at the National Library of Greece alone, but we did not shoot them.) So, in a sense, the numbers could go both ways, to a degree. If we counted all these, the total would swell to well over 6000 MSS. If we didn’t count Gregory’s at all, the number would drop by 225, to just under 5600.